Right Insights. Right Decisions.

Business intelligence provides immediate, actionable business data, making intelligent business decisions simple.

See and understand your business easily with Perfect BI Insight. Boost ROI.

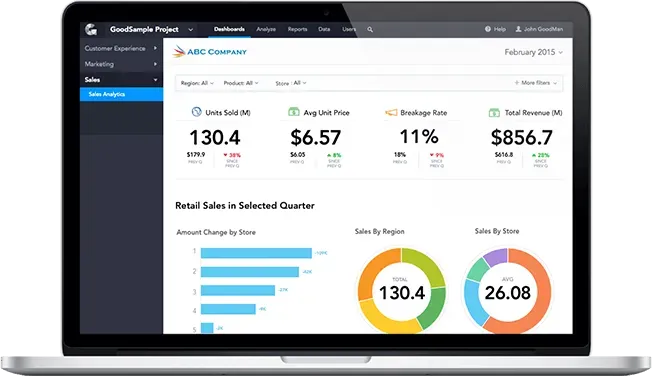

Report and Dashboard Designs

Connect to one of our solution experts!

- Standard Reporting.

- Strategic Dashboards.

- Analytic Dashboards.

- Operational Dashboards.

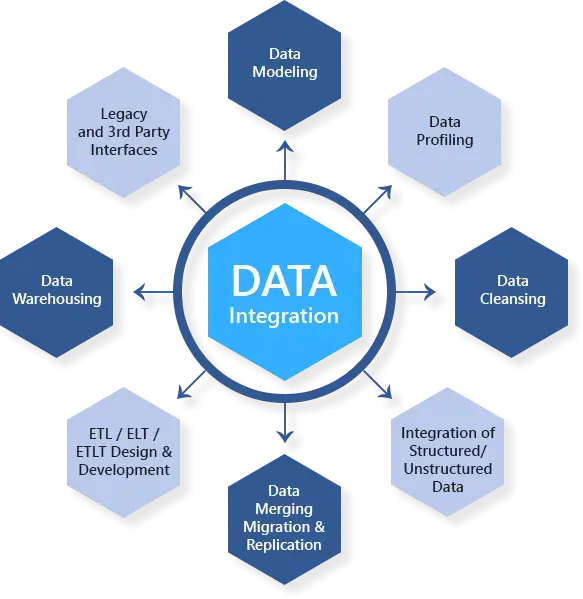

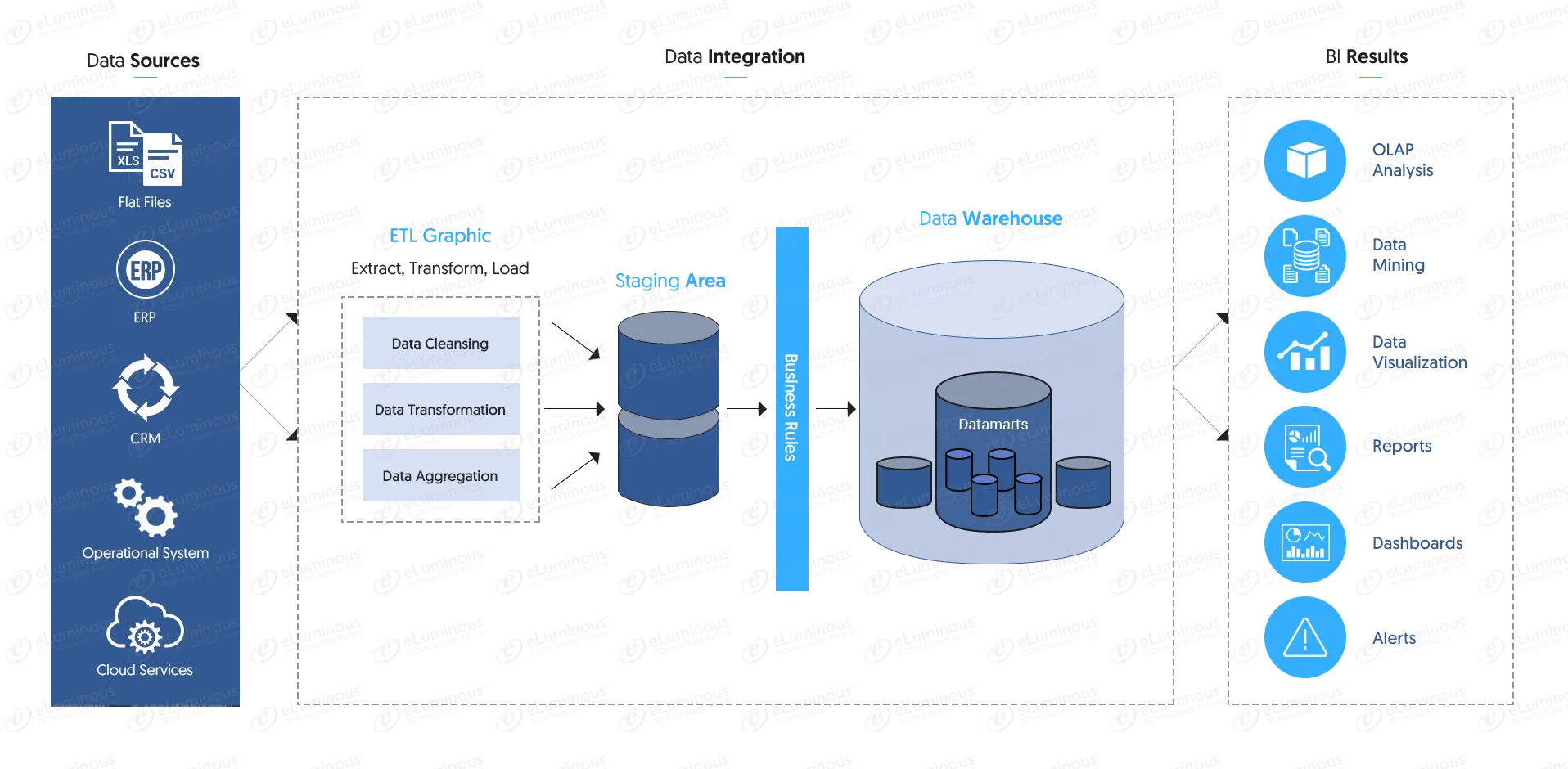

Data Integration

Merge Complex Data From Various Sources Into One Powerful Platform.

Key Offerings

- Convert raw data to clear business insights.

- Alert notifications for leading and lagging indicators and for emergencies.

- Build data pipelines in minutes: any input, any scale, with zero latency.

- Complete ETL (Extract, Transform, Load) Development.

- Data Warehousing.

- Extract data from a wide range of sources:

  - CSV

  - Database files:

    - MySQL

  - Salesforce

  - Google Sheets
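As a rough illustration of the extract, transform, and load steps listed above, here is a minimal Python sketch. It is not eLuminous' actual pipeline: the sample CSV, the column names (`store_id`, `country`, `revenue`), the `sales` table, and the helper functions are all hypothetical, and an in-memory SQLite database stands in for a real data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical sample input. A real pipeline would read from a file,
# MySQL, Salesforce, Google Sheets, or another source.
RAW_CSV = """store_id,country,revenue
101, DE ,1200.50
102,FR,not_available
103, de,830.00
"""

def extract(text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: normalize country codes, cast types, skip unparsable rows."""
    clean = []
    for row in rows:
        try:
            revenue = float(row["revenue"])
        except ValueError:
            continue  # drop rows whose revenue is not numeric
        clean.append((int(row["store_id"]), row["country"].strip().upper(), revenue))
    return clean

def load(records, conn):
    """Load: write cleaned records into a warehouse table (SQLite stands in here)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (store_id INTEGER, country TEXT, revenue REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", records)
    conn.commit()

def run_pipeline(text, conn):
    """Run all three steps, then return revenue totals per country."""
    load(transform(extract(text)), conn)
    query = "SELECT country, SUM(revenue) FROM sales GROUP BY country ORDER BY country"
    return conn.execute(query).fetchall()
```

Calling `run_pipeline(RAW_CSV, sqlite3.connect(":memory:"))` loads the two valid rows and returns one revenue total per country. The row with a non-numeric revenue is skipped rather than loaded.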

Need to build a data warehousing platform?

Work with our specialists

Get Started Now

OLAP Cube Designs

eLuminous will design multi-dimensional data views that empower planning, problem solving, and decision making across your business activities.

Key Offerings

- Self Service BI.

- Fast access to real-time business insights.

- High performance database designs.

- Star Schema design – Measurable business process insights

- Pivot Table design – Summary data from other data locations
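To make the star schema and pivot table ideas above concrete, here is a small Python sketch using an in-memory SQLite database. The table names (`fact_sales`, `dim_store`, `dim_date`), the sample rows, and the `revenue_pivot` helper are assumptions made for illustration only; they do not describe a specific eLuminous design.

```python
import sqlite3
from collections import defaultdict

conn = sqlite3.connect(":memory:")

# Star schema: one central fact table referencing two dimension tables.
conn.executescript("""
CREATE TABLE dim_store (store_key INTEGER PRIMARY KEY, country TEXT);
CREATE TABLE dim_date  (date_key  INTEGER PRIMARY KEY, quarter TEXT);
CREATE TABLE fact_sales (
    store_key INTEGER REFERENCES dim_store(store_key),
    date_key  INTEGER REFERENCES dim_date(date_key),
    revenue   REAL
);
INSERT INTO dim_store VALUES (1, 'DE'), (2, 'FR');
INSERT INTO dim_date  VALUES (10, 'Q1'), (20, 'Q2');
INSERT INTO fact_sales VALUES (1, 10, 100.0), (1, 20, 150.0), (2, 10, 80.0), (1, 10, 50.0);
""")

def revenue_pivot(conn):
    """Summarize revenue as a pivot: rows are countries, columns are quarters."""
    rows = conn.execute("""
        SELECT s.country, d.quarter, SUM(f.revenue)
        FROM fact_sales AS f
        JOIN dim_store AS s ON s.store_key = f.store_key
        JOIN dim_date  AS d ON d.date_key  = f.date_key
        GROUP BY s.country, d.quarter
    """)
    pivot = defaultdict(dict)
    for country, quarter, total in rows:
        pivot[country][quarter] = total
    return dict(pivot)
```

In this sketch the fact table holds the measurable events (sales revenue), while the dimension tables hold the attributes used to slice them. `revenue_pivot(conn)` returns a nested dictionary keyed by country and then by quarter, which is the same summary a pivot table would display.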

Our Process

eLuminous provides experienced BI data engineers and tools to review your business processes and transform them into intelligent and actionable BI dashboards.

Case Studies

Don't just take our word for it - see real results in our intriguing case studies.

Business Intelligence Solution for a Tyre Leader

Our client is a market leader with multinational distribution in the tyre (tire) industry. With 3,300 retail locations across 30 countries, the client found it increasingly difficult to accurately gather and analyze critical operating data to determine key operational efficiency and profitability metrics.

End-to-End Solutions – Clear Actionable Business Insights.

Business Intelligence Tools

Why us?

A truly next generation platform for enterprise BI and analytics

- eLuminous provides top-notch business intelligence design services via our team of highly experienced BI consultants and developers.

- Our services are structured and comprehensive. We analyze your business processes end to end and provide tailored solutions that are appropriate and insightful, giving you the insights to make better, more timely business decisions.

- All of our products and work are fully tested and cross-verified, ensuring your solution will work the first time around.

- Ask about our 200% customer satisfaction!