Following the Data Integration Guide-Part I where we got to know what is it all about, challenges, techniques, Today I am going to share how we have done data integration practically for one of our USA based client. Let’s go through this detailed case study:

About Our Client:

Our client is having retails business in consumer electronics & Computer peripherals across 11+ countries, having 600+ franchisee outlets. The client is having a main headquarter in UAE and nation headquarter in respective countries.

Business Operations:

Each POS (Point of Sale) sales of electronic goods and they order these goods from national headquarter through an email or phone. National headquarter is responsible to deliver respective electronics goods from their warehouse to the POS in the minimum time period. Usually national headquarter deliver goods from the warehouse which is nearer to the respective POS.

Each POS is having its own I.T (Information Technology) System to maintain sales orders like SAP, Salesforce, SugarCRM, Custom developed ERP/CRMs, also some of the POS maintains their sales orders in CSV, Excel or Word Document. POS send sales data to national headquarters as per their convenience and data formats like CSV, Excel, Text, PDF, Word document etc.

Here main challenge comes for national headquarter to take different data formats of sales orders to merge into one Excel as per POS and send it to the main headquarter on the last day of each month. Each national headquarter has appointed 2 dedicated man powers to do these data integration. So, if we consider 2 people per country then for 11 countries it comes to 22 people to work on data collection and sorting. Even doing that many human errors come in Excel data, which main headquarter receives from 11+ nation headquarters. And many iterations have to do to correct those errors. Further doing so human resources are not confident about final data collected and reports generated based on that. ‘C’ level people are always doubtful about these data and because of that they are not able to take any concrete business decisions or could not do any forecasting for overall business sales or particular product sale.

We have done data integration for this client in below 3 phases: –

1) Design

2) Implementation

3) Testing

Design:

Analysis of the requirements

- Why data integration is needed?

Answer:

Client wants to have centralized data storage from where he can get real insights of his business and can take business decisions on a real-time basis.

- What are the objectives and/or deliverables?

Answer:

Below is the objectives and/or deliverables:

- To have a centralized database

- Need standard Reports & Dashboards which will give a bird-eye view of the overall business revenue and operations.

- Self-service B.I (OLAP cubes for sales analysis)

- What will be the formats of data input?

Answer:

Below are the formats of data input

- CSV file

- Excel file

- Word Document file

- Text file

- ERP system

- CRP System

- Is all the data available to fulfill the requirements?

Answer:

Yes, all the data is available.

- After analysis of data, we came to know that every POS send data/figures of the current month along with last month, hence we create a new business rule to avoid duplication of data.

- The study showed that input data format changes as per POS requirement which causes the problem in ETL and it stop the execution process. To solve this we have created a new business rule for data integration and dynamic ETL which will take column names dynamically and compare with the master table. In this case, all mapping and business logic run dynamically as per input files of POS and country.

Analysis of the source systems

- What type of approach do you use for data loading?

Answer:

We have used Incremental extract approach to read data from FTP location and source system, where data will generate on daily basis and newly generated data will only be integrated into the data warehouse.

- What is the required/available frequency of the extracts?

Answer

: We set up cron for data integration, it continuously checks FTP location and integrates file when it gets.

- What is the quality of the data?

Answer:

Quality of the data is very poor and need to transform data and make it valuable while loading it into the database.

- Is the documentation available?

Answer:

No document was available, we have created some documents like RUD (Requirement Understanding Document), Data fields mapping document, System Architecture document, System flow diagram etc…

- What are the data volumes being processed?

Answer:

Month’s data volume is up to 1 TB and sometimes it increases more than that.

- Who is the system owner?

Answer:

CEO of the company is the system owner.

Any other non-functional requirements

- What will be system response time?

Answer:

System should integrate all monthly data within an hour once it will be available on the server for processing and should send an email notification after processing data to system support engineer.

- What is the estimated number of concurrent users?

Answer:

There are 50+ estimated user including all country headquarters managers and main headquarter users.

- What will be data security policy?

Answer:

We have implemented a security policy as per GDPR law. We have made many server security tweaks on the server so that data will be secured on it and only necessary data will get exposed to respective users only.

- What will be data backup policy?

Answer:

On the server data, backup policies are set so that not a single data will get lost.

- What will be Maintenance and upgrade expenses?

Answer

: Maintenance & upgrade expenses are very low as compared to the client’s existing expenditure on more than 40+ human resources, it comes down to only 1 person’s salary.

Implementation:

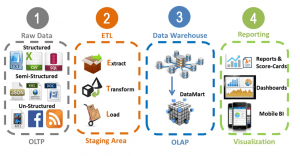

After design, Implementation phase comes. We have used Data Warehouse Approach for Data Integration implementation, In this approach, we create the multilevel architecture of Data warehouse and each level has its own rules of data integration and we need to follow this while integrating data. following are the level of Datawarehouse architecture –

- Staging

- DWH (Data ware Housing)

Below is the diagrammatic representation of the structure of Datawarehouse which we have implemented in this project :

-

OLTP

This includes all input data sources like CSV, EXCEL, TEXT, DOC.

-

Staging Area

We load all raw data into the staging area as it is and here data remains temporarily.

-

Data Warehouse

In the data warehouse, we load all input data from the staging area along with all business logic. In DWH data in the knowledge form. After loading data from staging to DWH, we delete all the temporary data from staging.

-

Reporting

Based on data warehouse we have created reports and dashboards.

Staging Area –

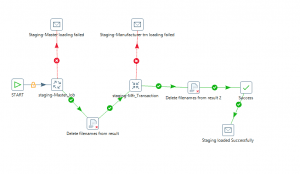

We have developed a web-based portal to get input data files from each and every POS, Each POS has its own access credential with which they can log in and upload sales data. our ETL reads these files and moves to the temporary location and from where we actually Extract, Transform and Loads data into the staging area. while integrating data we mainly focused on the following points –

- Mapping of input column with database structurer

- Understand values from the column and assign the proper data type

- Do datatype conversion if required (typecasting)

- Trim special and unwanted character

- Send Email alert after successfully loading as well as if the error occurs.

We are storing input data in the staging area for temporary data storage on the server.

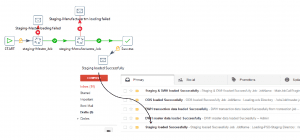

Following diagram shows Job load staging master and transaction transformation:

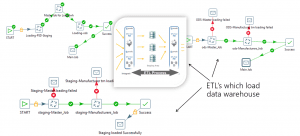

DWH (Data warehouse)

ETL read data from staging area to data warehouse while integrating this data we implement Business rules for each row e.g. calculate net price, list price, total discount, bonus, total tires sold out etc.

In DWH we have handled error logs and exceptions very smartly, we receive an email notification with attachment if any error or exception comes in the data integration process.

Testing:

After Implementation, Testing phase comes. In which we have tested end to end flow manually with some sample data, right from files coming to FTP, reading through ETL, going to staging and then data warehouse.

We have used follow points while testing: –

- We have tested the system by providing input files empty, with wrong input data, with different file types.

- We have made validation testing in which we have tested whether trimming has been done, whether special characters are getting processed or not, is their consistency in data which is getting stored like Upper and Lower case.

- Thoroughly checked data transformation. In which we have checked whether all ETL transformations are running as per developed sequence.

- Also tested whether input data type matches with data stored in the database.

- Primary key, foreign key, null, default value constraints, etc. are intact and data is processed as per those.

- The ACID properties of source and destination are validated.

- Have performed data loading performance tests.

- Data is going to increase over the time period. Therefore, Scalability test has been done by providing and testing huge input data (2TB).

Conclusion

We have achieved the main goal of the client to make data integration for the input data coming from 600+ electronic franchises across 11+ countries.

With this solution, the client has real-time figures for business analysis and he can take the right decision for business growth with confidence.

This solution also reduces a lot of time which was getting invested previously while doing manual data integration and sorting.

The client has done cost-cutting for human resources who were working in 11+ countries for data integration (22+ people). Now he is utilizing that talent in sales marketing and making business strategies/campaigns for respective countries.

Let me know if you would like to know more about our work in business intelligence services.