The Role of AI in Software Testing

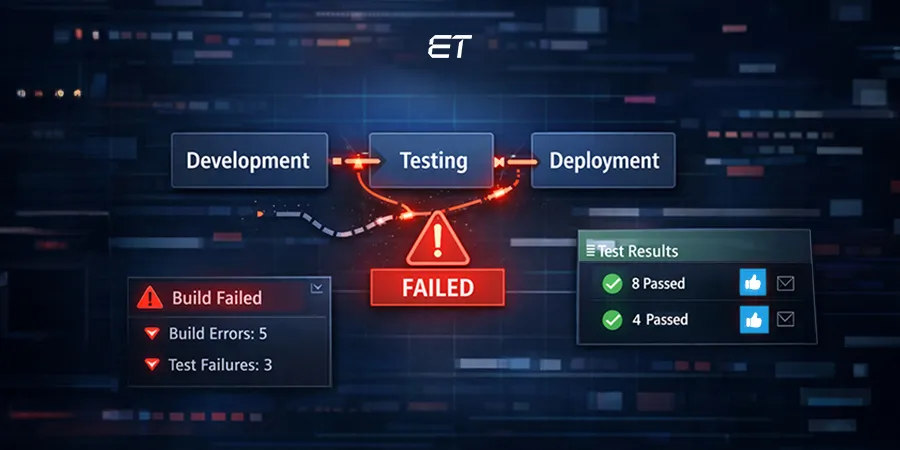

In enterprise software delivery, failure rarely comes from lack of effort; it comes from a lack of foresight. As systems grow more interconnected and release cycles compress, traditional testing struggles to provide the visibility and assurance that modern enterprises require.

AI in software testing addresses this gap by introducing predictive intelligence into quality engineering. It enables testing systems to understand application behaviour, learn from historical outcomes, and adapt automatically as code changes.

Rather than reacting to failures, enterprises can anticipate risk, stabilise automation, and protect release confidence at scale.

By reducing test fragility and optimising execution across CI/CD pipelines, AI allows quality teams to operate with greater precision and control.

The outcome is not just faster releases, but informed, risk-aware decisions that align testing with business priorities.

And while these gains are visible in daily testing tasks, the real transformation happens at a larger scale, across the entire Software Testing Life Cycle (STLC). So, how exactly does AI reshape STLC, and what should decision-makers like you know before adopting it?

What is AI in Software Testing?

AI-powered testing brings learning, reasoning, and pattern recognition to quality assurance. It goes beyond rigid scripts to create test systems that adapt on their own, predict risks, and even recover when things break.

Now, you might be wondering: isn’t automation already supposed to solve these problems? That’s a fair question.

The reality is, traditional automation has its limits. It executes what it is told, but it does not learn from past runs or predict future risks, and it certainly does not heal itself when things break. AI changes that equation.

Manual Software Testing Vs AI Software Testing

Here is a side-by-side comparison of manual and traditional automation versus AI-powered testing to make the paradigm shift clear:

| Aspect | Manual / Traditional Automation | AI-Powered Testing |
| --- | --- | --- |
| Speed | Slow, repetitive execution | Adaptive, learns in real time |
| Coverage | Limited to predefined test cases | Dynamically expands with app behavior |
| Accuracy | Human error-prone, fragile locators | Self-healing scripts, reduced false positives |
| Scalability | Difficult to scale across large, complex systems | Learns patterns, scales seamlessly |
| Maintenance | High effort when UIs change | Auto-fixes locators, drastically reducing overhead |

Types Of AI Software Testing

AI in software testing is not a single tool but an application of several deep learning and machine learning disciplines. These methods focus on different aspects of the QA process to build a comprehensive quality strategy.

1. AI-Powered Functional Testing

This type focuses on ensuring that the software functions exactly as intended. AI is used for autonomous test generation and self-healing during test execution to maintain test stability and increase coverage.

2. AI-Powered Visual Testing (Computer Vision)

It goes beyond the code to validate the actual end-user experience. AI compares current screens pixel-by-pixel against baselines to detect visual regressions, layout issues, and broken user interfaces across different devices and browsers.

3. AI-Powered Performance Testing

AI is deployed to analyze historical performance data and logs to predict performance bottlenecks under load. It can simulate diverse and realistic load patterns that mimic actual user behavior, making load tests far more insightful.

4. AI for Test Data Management

Generative AI creates large volumes of synthetic, privacy-compliant test data that accurately mimics the statistical properties of production data, eliminating data bottlenecks and reducing compliance risks, especially in highly regulated industries like finance and healthcare.

5. AI for Test Prioritization and Optimization

Using sophisticated machine learning algorithms, AI analyzes code changes, developer commits, and past defect data to dynamically decide which subset of tests to run for a new build, ensuring the highest risk areas are tested first and minimizing overall regression time.

What Is The Role of AI In Software Testing?

The role of AI is to act as an intelligent augmentation layer over existing testing processes, transforming them from reactive fault detection to proactive risk prevention. Its core functions are to:

- Accelerate Release Cycles: By automating the brittle parts of script maintenance and running only the most critical tests, AI drastically reduces the testing bottleneck in CI/CD pipelines.

- Enhance Test Reliability: Self-healing scripts and intelligent locators stabilize test suites, eliminating the constant firefighting caused by flaky, broken tests.

- Maximize Coverage and Quality: AI can explore user paths and edge cases that human-written tests often miss, ensuring a more comprehensive test suite and lower defect leakage to production.

- Provide Predictive Insights: Shifting the focus from finding existing bugs to predicting where future bugs are likely to occur based on historical data and code complexity.

How AI in Software Testing Works

Leading organizations are investing in AI-driven quality engineering to accelerate release cycles, cut defect leakage, and improve test reliability at scale. But the critical question remains: how does AI in software testing actually work?

Machine Learning (ML)

Think of ML as that one QA team member who never forgets. It remembers every bug your system has ever had and uses that memory to predict where the next one might appear.

By analyzing code churn, complexity, and defect density, algorithms such as Random Forests and Neural Networks assign “risk scores” to different modules. The result? Your team knows exactly where to focus testing instead of spreading efforts thin.
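To make the idea concrete, here is a minimal sketch of risk scoring. It is not a trained Random Forest or neural network: it uses a hand-weighted logistic function as a stand-in. The `ModuleMetrics` fields, the weights, and the module names are all hypothetical; in practice the weights would be learned from your own defect history.

```python
import math
from dataclasses import dataclass


@dataclass
class ModuleMetrics:
    name: str
    churn: int             # lines changed recently (assumed metric)
    complexity: float      # e.g. average cyclomatic complexity
    defect_density: float  # past defects per 1,000 lines


# Hypothetical weights; a trained model would replace these.
WEIGHTS = {"churn": 0.004, "complexity": 0.08, "defect_density": 0.5}
BIAS = -3.0


def risk_score(m: ModuleMetrics) -> float:
    """Return a score in (0, 1); higher means test this module first."""
    z = (
        BIAS
        + WEIGHTS["churn"] * m.churn
        + WEIGHTS["complexity"] * m.complexity
        + WEIGHTS["defect_density"] * m.defect_density
    )
    return 1.0 / (1.0 + math.exp(-z))


def rank_modules(modules):
    """Sort modules from highest to lowest risk."""
    return sorted(modules, key=risk_score, reverse=True)


modules = [
    ModuleMetrics("billing", churn=900, complexity=25.0, defect_density=4.0),
    ModuleMetrics("profile", churn=50, complexity=6.0, defect_density=0.5),
    ModuleMetrics("search", churn=300, complexity=12.0, defect_density=1.5),
]
ranked = [m.name for m in rank_modules(modules)]
print(ranked)  # billing first, then search, then profile
```

The ranking, not the absolute score, is what drives where testing effort goes first.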

Natural Language Processing (NLP)

How many times have business requirements been misunderstood in testing? NLP eliminates that pain. It takes plain English like “Login with an invalid password and check for the error message” and converts it into an executable test script.

Using techniques such as tokenization, intent recognition, and entity extraction, NLP lets business analysts and product managers create test cases directly, without writing code.

This bridges the gap between business and QA, saving both time and frustration.
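As a rough illustration, the sketch below maps the example sentence to structured test steps. It uses simple regular-expression rules rather than a real NLP model, and the patterns, action names, and argument keys are assumptions made up for this example.

```python
import re

# Hypothetical phrase patterns, each paired with a builder for a test step.
PATTERNS = [
    (
        re.compile(r"login with an? (?P<kind>valid|invalid) password", re.I),
        lambda m: ("login", {"password_kind": m.group("kind").lower()}),
    ),
    (
        re.compile(r"check for the (?P<what>[\w\s]+?) message", re.I),
        lambda m: ("assert_message", {"message_type": m.group("what").strip().lower()}),
    ),
]


def parse_requirement(text: str):
    """Split a sentence on the word 'and' and map each clause to a test step."""
    steps = []
    for clause in re.split(r"\band\b", text):
        for pattern, build in PATTERNS:
            match = pattern.search(clause)
            if match:
                steps.append(build(match))
                break  # first matching pattern wins for this clause
    return steps


steps = parse_requirement(
    "Login with an invalid password and check for the error message"
)
print(steps)
```

A production tool would replace the fixed patterns with intent recognition and entity extraction, then bind each step to real UI or API actions.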

Computer Vision

Automation might say “pass,” but your users still complain that a button is not visible or that the layout looks broken on mobile. That is where computer vision steps in.

By using CNNs and image-diff algorithms, AI checks screens pixel by pixel across devices. It validates that the interface looks and behaves correctly for the end user. In other words, it tests the experience, not just the code.
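The image-diff half of that idea can be sketched in a few lines. This example covers only a tolerance-based pixel comparison, not a CNN, and it assumes screenshots are already loaded as rows of grayscale values. The `tolerance` and `threshold` defaults are illustrative, not recommended settings.

```python
def diff_ratio(baseline, current, tolerance=10):
    """Fraction of pixels whose grayscale value differs by more than tolerance."""
    if len(baseline) != len(current) or any(
        len(a) != len(b) for a, b in zip(baseline, current)
    ):
        raise ValueError("screenshots must have the same dimensions")
    total = sum(len(row) for row in baseline)
    changed = sum(
        1
        for row_a, row_b in zip(baseline, current)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > tolerance
    )
    return changed / total


def has_visual_regression(baseline, current, threshold=0.05):
    """Flag a regression when more than `threshold` of the pixels changed."""
    return diff_ratio(baseline, current) > threshold


# Tiny 3x4 "screenshots": 255 is white background, 0 is a dark button.
baseline = [[255, 255, 255, 255], [255, 0, 0, 255], [255, 255, 255, 255]]
shifted = [[255, 255, 255, 255], [255, 255, 0, 0], [255, 255, 255, 255]]
antialiased = [[255, 252, 255, 255], [255, 3, 0, 255], [255, 255, 255, 255]]

print(has_visual_regression(baseline, shifted))      # button moved
print(has_visual_regression(baseline, antialiased))  # rendering noise only
```

The tolerance is what separates a real layout shift from harmless rendering noise, which is the main source of false positives in naive pixel comparison.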

Benefits Of AI In Software Testing

AI in testing delivers profound, measurable business benefits that extend far beyond the QA team, impacting cost, time-to-market, and customer satisfaction.

1. Drastically Reduced Test Maintenance Overhead

The single biggest drain on automation efforts is maintenance. AI’s self-healing capabilities, which automatically update element locators and adjust scripts to UI changes, can cut much of that effort. This frees up highly paid QA engineers to focus on higher-value, strategic work.

2. Accelerated Release Velocity and Time-to-Market

By intelligently prioritizing and optimizing test suites, AI slashes the time needed for full regression testing from days to hours. This allows teams to meet the aggressive demands of continuous integration and continuous deployment (CI/CD), shipping new, reliable features faster than the competition.

3. Enhanced Defect Leakage Prevention

AI’s predictive defect analytics flag high-risk code modules and prioritize testing efforts accordingly, which can significantly reduce the number of bugs that leak into production. This is a crucial defense that protects brand reputation and avoids costly post-release fixes.

4. Higher Test Coverage with Autonomous Generation

Generative AI can analyze application structure, user behavior, and code changes to automatically generate test cases, including complex edge cases and negative scenarios that human testers often overlook. This leads to a more comprehensive and robust test suite without scaling up headcount.

5. Data-Driven Decision Making

AI-generated reports move beyond simple pass/fail statuses. They provide root cause analysis, risk scores, and confidence levels for a release, grounding the QA process in objective data and empowering leaders to make faster, more informed decisions about deployment readiness.

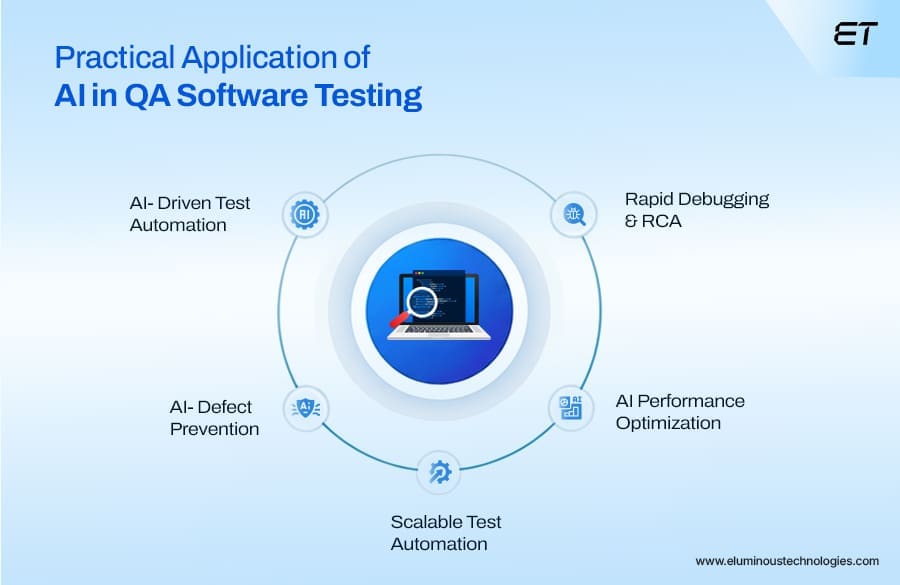

Key Use Cases and Applications of AI in Software Testing

The real value of AI in testing lies in its ability to drive business benefits.

Faster releases, reduced maintenance, higher coverage, and fewer defects in production all translate directly into cost savings and customer trust. Let’s explore where the biggest wins are happening.

Autonomous Test Case Generation

Traditional test design is time-consuming and often misses edge cases. With Generative AI in software testing, applications can be scanned for code changes, user flows, and behavioral patterns to automatically generate comprehensive test scenarios.

The benefit? Coverage expands without adding headcount. In fact, enterprises using autonomous generation report up to a 30% boost in test coverage within a single release cycle. It helps them catch risks earlier and improve release confidence.

Self-Healing Test Scripts

One of the biggest frustrations in QA is script maintenance. A minor UI change, like a renamed button, can break dozens of tests. Self-healing scripts, increasingly backed by generative AI, cut this overhead by detecting locator changes in real time and updating them automatically.

Organizations adopting this approach have seen maintenance efforts drop by 70–80%, freeing teams to focus on strategic testing rather than firefighting broken scripts.
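Here is a simplified sketch of how self-healing can work. It assumes the page is available as a list of attribute dictionaries and that a "fingerprint" of each element was saved on the last passing run. The function names, the attribute set, and the 0.5 cut-off are hypothetical; commercial tools use richer signals and their own APIs.

```python
def find_element(dom, locator):
    """Return the first element whose attributes match every key in locator."""
    for element in dom:
        if all(element.get(k) == v for k, v in locator.items()):
            return element
    return None


def similarity(element, fingerprint):
    """Share of fingerprint attributes that the element still has unchanged."""
    matches = sum(1 for k, v in fingerprint.items() if element.get(k) == v)
    return matches / len(fingerprint)


def find_with_healing(dom, locator, fingerprint, min_score=0.5):
    """Try the primary locator; on failure, fall back to the closest match."""
    element = find_element(dom, locator)
    if element is not None:
        return element, locator
    best = max(dom, key=lambda e: similarity(e, fingerprint), default=None)
    if best is None or similarity(best, fingerprint) < min_score:
        raise LookupError(f"no element matches {locator}")
    healed = {"id": best["id"]}  # assumes every element carries an id
    return best, healed


# Fingerprint recorded on the last passing run (hypothetical attributes).
fingerprint = {"id": "submit-btn", "tag": "button", "text": "Submit", "form": "login"}

# In the new build the button id was renamed.
dom = [
    {"id": "cancel", "tag": "button", "text": "Cancel", "form": "login"},
    {"id": "login-submit", "tag": "button", "text": "Submit", "form": "login"},
]

element, healed_locator = find_with_healing(dom, {"id": "submit-btn"}, fingerprint)
print(healed_locator)  # the locator to store for the next run
```

The minimum score matters: without it, a healed locator could silently point at the wrong element and turn a broken test into a false pass.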

Intelligent Test Prioritization and Optimization

Running every test for every build is simply unsustainable in fast-moving CI/CD pipelines. AI in software testing changes the equation by analyzing defect history, code diffs, and module risk scores to decide which tests truly matter.

Instead of spending two days on regression, teams can finish in less than a day while still catching the same number of defects. The advantage is clear: reduced cycle times, accelerated deployments, and no compromise on quality.
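A minimal sketch of that selection logic is shown below. The scoring rule (tests touching changed files first, then historical failure rate) and the test metadata fields are assumptions for illustration; real tools learn the ranking from many more signals.

```python
def prioritize(tests, changed_files, budget):
    """Pick the highest-value tests that fit within a time budget (seconds)."""
    changed = set(changed_files)

    def score(test):
        touches_change = bool(set(test["covers"]) & changed)
        # Hypothetical weighting: changed code dominates, then failure history.
        return (2.0 if touches_change else 0.0) + test["failure_rate"]

    selected, used = [], 0.0
    for test in sorted(tests, key=score, reverse=True):
        if used + test["duration"] <= budget:
            selected.append(test["name"])
            used += test["duration"]
    return selected


tests = [
    {"name": "test_checkout", "covers": ["cart.py", "payment.py"],
     "failure_rate": 0.20, "duration": 120},
    {"name": "test_login", "covers": ["auth.py"],
     "failure_rate": 0.05, "duration": 30},
    {"name": "test_search", "covers": ["search.py"],
     "failure_rate": 0.10, "duration": 60},
    {"name": "test_refund", "covers": ["payment.py"],
     "failure_rate": 0.02, "duration": 90},
]

selected = prioritize(tests, changed_files=["payment.py"], budget=240)
print(selected)
```

With a commit that touches `payment.py` and a four-minute budget, both payment-related tests run first, and the remaining time goes to whichever lower-priority test still fits.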

Predictive Defect Analytics

Imagine being able to fix a bug before it ever surfaces in testing. That is what predictive analytics brings to QA. By mining commit logs, developer activity, and historical defects, AI highlights modules with the highest likelihood of failure.

This proactive approach has helped organizations cut post-production defects by more than 28%, shifting QA from a reactive safety net into a forward-looking risk prevention strategy.

Synthetic Test Data Generation

Access to realistic, compliant test data has always been a bottleneck. AI resolves this by generating large-scale, privacy-compliant synthetic datasets that mimic production without exposing sensitive information.

For industries like healthcare and finance, this means no more waiting on masked datasets or risking compliance violations. Teams gain agility, with test cycles accelerated simply because the right data is always available when needed.
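The sketch below shows the basic shape of synthetic data generation, with a simple log-normal fit standing in for a generative model. The field names and sample rows are invented for the example. Note that matching a distribution does not by itself make data privacy-compliant, so treat this as an illustration of the mechanics only.

```python
import math
import random
import statistics


def fit_lognormal(amounts):
    """Estimate log-normal parameters from positive sample amounts."""
    logs = [math.log(a) for a in amounts]
    return statistics.mean(logs), statistics.stdev(logs)


def synthesize(production_rows, n, seed=0):
    """Generate n synthetic rows that follow the fitted amount distribution."""
    rng = random.Random(seed)  # seeded so test data is reproducible
    mu, sigma = fit_lognormal([row["amount"] for row in production_rows])
    currencies = [row["currency"] for row in production_rows]
    return [
        {
            "customer_id": f"SYN-{i:06d}",  # never reuse a real identifier
            "amount": round(rng.lognormvariate(mu, sigma), 2),
            "currency": rng.choice(currencies),  # keeps the observed mix
        }
        for i in range(n)
    ]


# Hypothetical production sample.
production = [
    {"customer_id": "C-1001", "amount": 12.50, "currency": "USD"},
    {"customer_id": "C-1002", "amount": 40.00, "currency": "USD"},
    {"customer_id": "C-1003", "amount": 18.75, "currency": "EUR"},
    {"customer_id": "C-1004", "amount": 99.90, "currency": "USD"},
    {"customer_id": "C-1005", "amount": 55.20, "currency": "EUR"},
    {"customer_id": "C-1006", "amount": 23.40, "currency": "USD"},
]

synthetic = synthesize(production, n=500)
print(synthetic[0])
```

Because the generator is seeded, the same dataset can be recreated on demand, which keeps test runs repeatable.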

Top 5 AI Testing Tools

The landscape of AI testing tools is rapidly expanding. Here are five of the leading platforms that demonstrate the power of AI in testing today:

| Rank | Tool Name | Key AI Feature Focus | Core Benefit |
| --- | --- | --- | --- |
| 1 | ACCELQ Autopilot | Codeless enterprise automation | Autonomous test design |
| 2 | Testsigma | NLP-based accessible testing | Plain English → executable tests |
| 3 | Functionize | Self-maintaining test automation | NLP test generation + self-healing |
| 4 | Mabl | Cloud-native DevOps testing | Auto-healing + predictive performance |
| 5 | Applitools | Visual AI testing | Computer-vision visual validation |

The best AI software testing tools integrate seamlessly into CI/CD pipelines and align with enterprise-scale needs.

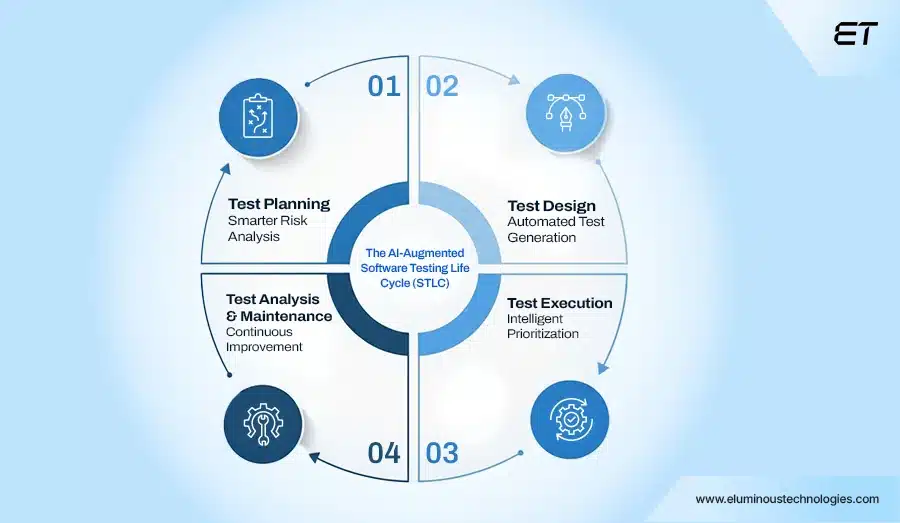

The AI-Augmented Software Testing Life Cycle (STLC)

Every software team knows the cycle: plan, design, execute, maintain. But in practice, this cycle slows down under pressure.

Why? Because too many test cases mean too much noise and not enough focus.

AI redefines the Software Testing Life Cycle by shifting it from a manual, reactive process to an intelligent, outcome-driven framework.

1. Test Planning – Smarter Risk Analysis

Instead of relying on assumptions, generative AI in software testing shows you exactly where to focus first.

- Identifies high-risk modules based on historical defects and code complexity.

- Provides accurate effort & timeline estimations using predictive analytics.

- Ensures business-critical coverage instead of blanket testing.

In short, AI makes planning specific and actionable by prioritizing high-risk modules, aligning timelines to real data, and guaranteeing that critical business functions never go untested.

2. Test Design – Automated Test Generation

What usually takes weeks to design, AI in software test automation can create in hours with better accuracy.

- Automatically generates test cases from requirements.

- Creates synthetic yet compliant datasets for realistic testing.

- Removes redundancies while ensuring strong edge case coverage.

This means that test design delivers higher coverage in less time, with AI producing compliant datasets, reducing human error, and uncovering edge cases that traditional methods often miss.

3. Test Execution – Intelligent Prioritization

AI makes sure the right tests run at the right time, cutting wasted effort.

- Runs critical tests first based on commit history and risks.

- Balances workloads across multiple environments for speed.

- Dynamically adapts when failures are environment-driven.

The result is measurable acceleration: faster execution cycles, optimized resource use across environments, and defect detection aligned with real risk rather than blind sequencing.

4. Test Analysis & Maintenance – Continuous Improvement

Instead of testers chasing broken scripts, AI in software test automation quietly keeps the suite healthy.

- Performs root cause analysis by linking logs, stack traces, and commits.

- Applies self-healing automation to update scripts automatically.

- Predicts defect clusters early, reducing production risks.

This transforms analysis into proactive risk prevention, with AI healing scripts in real time, connecting failures back to their source, and forecasting defect clusters before they reach production.

What Are The Various Methods For AI-Based Software Test Automation?

1. Model-Based Testing (MBT) with AI

AI-driven Model-Based Testing builds an executable behavioural model of the application by analysing UI flows, API contracts, state transitions, and historical user journeys. The model continuously evolves as the application changes.

Specific implementation details:

- Uses graph-based state modelling to map screens, APIs, and workflows

- Automatically regenerates test paths when UI elements or logic change

- Prioritises high-risk paths using usage analytics and defect history

Example:

In a SaaS CRM platform, AI models lead creation, pipeline movement, role-based permissions, and approval workflows. When a new approval rule is introduced, the model automatically updates and regenerates tests covering permission conflicts and edge-case transitions.
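The graph-based modelling idea can be sketched as follows. The state names are hypothetical and the model is edited by hand here; in an AI-driven tool the model would be inferred and updated from the application itself. The point is that test paths are derived from the model, so changing the model regenerates them.

```python
def generate_paths(model, start, goal, path=None):
    """Enumerate every cycle-free path from start to goal (depth-first)."""
    path = (path or []) + [start]
    if start == goal:
        return [path]
    paths = []
    for nxt in model.get(start, []):
        if nxt not in path:  # skip states already visited on this path
            paths.extend(generate_paths(model, nxt, goal, path))
    return paths


# Hypothetical CRM workflow: state -> reachable next states.
crm = {
    "lead_created": ["qualified"],
    "qualified": ["proposal"],
    "proposal": ["closed_won"],
}
before = generate_paths(crm, "lead_created", "closed_won")

# A new approval rule adds a state and two transitions.
crm["proposal"] = ["manager_approval", "closed_won"]
crm["manager_approval"] = ["closed_won", "proposal"]
after = generate_paths(crm, "lead_created", "closed_won")

print(len(before), len(after))
for p in after:
    print(" -> ".join(p))
```

Each generated path is a candidate end-to-end test; prioritising among them by usage and defect history is a separate step.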

2. Generative Adversarial Networks (GANs) for Test Data Generation

GANs generate statistically accurate synthetic datasets that preserve real-world patterns, such as distributions, correlations, and anomalies, while avoiding the exposure of sensitive information.

Specific implementation details:

- Mimics production-level data skew and outliers

- Supports structured (databases) and semi-structured (JSON, logs) data

- Enables data variation for negative and boundary testing

Example:

For a fintech application, GANs generate transaction sequences with realistic fraud patterns, failed settlements, and currency conversions, enabling accurate testing of fraud detection engines and risk models.

3. Deep Learning for Root Cause Analysis (RCA)

Deep learning models correlate failures across test automation results, CI/CD pipelines, infrastructure metrics, and source control systems to identify the true source of defects.

Specific implementation details:

- Uses neural networks to detect failure patterns across builds

- Maps test failures to specific code commits or configuration changes

- Continuously improves accuracy using historical defect data

Example:

In a microservices architecture, AI detects that test failures occur only when a specific service is deployed, along with increased memory usage, identifying a memory leak introduced in the latest container image.
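The correlation step behind that example can be illustrated without a neural network. The sketch below swaps in a plain frequency comparison: for each deployed change, it compares how often builds fail with and without it. The build records and service version labels are hypothetical.

```python
def suspect_changes(builds):
    """Rank changes by how much more often builds fail when each is present."""
    all_changes = {c for b in builds for c in b["changes"]}
    ranking = []
    for change in all_changes:
        with_change = [b["failed"] for b in builds if change in b["changes"]]
        without = [b["failed"] for b in builds if change not in b["changes"]]
        rate_with = sum(with_change) / len(with_change)
        rate_without = sum(without) / len(without) if without else 0.0
        ranking.append((change, rate_with - rate_without))
    # Highest failure lift first; the name breaks ties for a stable order.
    return sorted(ranking, key=lambda item: (-item[1], item[0]))


# Hypothetical build history: which service versions were deployed, and the result.
builds = [
    {"changes": {"orders:v41"}, "failed": False},
    {"changes": {"orders:v41", "cart:v12"}, "failed": False},
    {"changes": {"orders:v42", "cart:v12"}, "failed": True},
    {"changes": {"orders:v42"}, "failed": True},
    {"changes": {"orders:v41", "cart:v12"}, "failed": False},
]

ranking = suspect_changes(builds)
print(ranking[0])  # the most suspicious change
```

A deep learning approach would add further signals such as memory usage and log patterns, but the output has the same shape: a ranked list of likely causes for engineers to check first.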

4. Reinforcement Learning (RL) for Intelligent Exploratory Testing

Reinforcement Learning enables AI agents to autonomously explore applications without predefined scripts, learning optimal paths to uncover defects.

Specific implementation details:

- Reward functions prioritise crashes, unhandled exceptions, and new states

- Learns high-risk navigation paths over multiple test runs

- Simulates unpredictable real-user behaviour at scale

Example:

In a mobile wallet app, the RL agent learns that rapid switching between biometric authentication and PIN entry causes session corruption, an issue missed by scripted test cases.

5. Natural Language Processing (NLP) for Test Case Authoring

NLP enables the conversion of business requirements, user stories, and acceptance criteria into executable test cases.

Specific implementation details:

- Parses Gherkin, user stories, and plain English requirements

- Maps intent to UI elements, APIs, and validation rules

- Reduces dependency on test scripting expertise

Example:

A Jira story stating “Ensure failed login attempts lock the account after five tries” is automatically translated into UI actions, backend validations, and security checks.

How Can AI Optimize Software Testing?

Optimization is the key business outcome of AI adoption. AI optimizes testing by injecting data and intelligence into every phase, moving the function from a cost center to a strategic asset.

- Optimizing Test Suite Size: AI in software testing analyzes the execution history of every test case, identifying redundant, overlapping, or low-value tests. It recommends which tests can be retired or consolidated, leading to a leaner, faster, and more maintainable test suite.

- Optimizing Resource Allocation: By quantifying risk in real-time, AI tells teams exactly where to deploy human testers (for complex exploratory work) and where to deploy automated tests (for high-risk regression). This intelligent allocation ensures maximum impact for every hour spent.

- Optimizing Feedback Loops: AI integrates directly into the CI/CD pipeline, providing immediate, risk-prioritized feedback to developers upon every commit. This rapid feedback loop allows developers to address bugs within minutes of their introduction, dramatically reducing the cost of fixing them.

- Optimizing Cross-Browser/Device Coverage: Computer Vision and ML models can intelligently group similar browser/device configurations and test one of them thoroughly, then use visual validation to quickly confirm consistency across the others. This ensures broad coverage without running a redundant, full test suite on every single permutation.
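As one illustration of suite-size optimization, the sketch below uses a greedy set-cover heuristic to find tests whose coverage is already provided by others. The coverage map is hypothetical, and greedy selection is a simple stand-in for the richer analysis an AI tool would perform on execution history.

```python
def minimize_suite(coverage):
    """Greedy set cover: keep picking the test that covers the most uncovered items."""
    remaining = set().union(*coverage.values())
    kept = []
    while remaining:
        # sorted() makes tie-breaking deterministic (alphabetical by test name).
        best = max(sorted(coverage), key=lambda t: len(coverage[t] & remaining))
        kept.append(best)
        remaining -= coverage[best]
    return kept


# Hypothetical map of test name -> features it exercises.
coverage = {
    "test_a": {"login", "logout"},
    "test_b": {"login"},
    "test_c": {"checkout", "refund"},
    "test_d": {"refund"},
    "test_e": {"search"},
}

kept = minimize_suite(coverage)
retire_candidates = sorted(set(coverage) - set(kept))
print(kept)
print(retire_candidates)
```

The output is a recommendation, not a verdict: a test flagged as redundant on coverage alone may still check something different, so a human review before retiring it is sensible.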

The Future of AI in Testing

The trajectory of AI in software testing points toward full autonomy and end-to-end quality orchestration. The future will be defined by agentic AI frameworks and four shifts in particular:

1. Autonomous Quality Agents: AI in software testing will evolve into autonomous agents capable of perceiving a requirement, generating the entire test plan, creating the data, executing the tests, reporting the bug, and even suggesting the code fix, all without explicit, step-by-step human scripting.

2. Predictive Quality Gates: The simple “pass/fail” will be replaced by a live, predictive “Release Confidence Score.” This ML-powered score will aggregate data from production monitoring, historical defect rates, and test execution results to provide an objective, continuous measure of system health.

3. Self-Evolving Test Suites: Test cases will not just heal themselves; they will evolve. As the application’s user base and behavior change, AI in software testing will automatically refactor, create, and retire test cases to keep the test suite aligned with real-world business risks and usage patterns.

4. Codeless Test Design for All: NLP capabilities will advance to allow anyone in the business, from product managers to marketing specialists, to define complex test scenarios simply by describing them in plain language, truly democratizing the testing process.

Wrapping Up

For enterprises, the role of AI in software testing is no longer optional but is fast becoming essential. By enabling autonomous test creation, self-healing scripts, and predictive defect analytics, AI transforms QA into a strategic lever for business growth.

Yet, adopting AI in software testing is not just about deploying advanced technology; it requires a strategic shift. Forward-looking enterprises are already redefining quality assurance as a competitive differentiator, while those clinging to traditional methods risk slower delivery and higher defect leakage.

The real question is, will your enterprise take the lead in adopting intelligent QA, or will it be left reacting while others set the standard?
