RAG vs Fine-Tuning: Which Strategy Scales Your AI Effectively?

Your AI assistant is live, but it’s either pulling outdated information or producing inconsistent responses. The real question is: do you fix it with real-time data access, or by retraining the model itself?

There are two primary approaches. The first is Retrieval-Augmented Generation (RAG), an architecture that improves LLM outputs by retrieving relevant, up-to-date information from external sources at runtime. The second is fine-tuning, a machine learning technique that adapts a pre-trained model using domain-specific data to improve its behavior and outputs.

Both approaches are valid, but the real decision comes down to trade-offs across cost, latency, control, and maintenance. Understanding these differences is critical to making the right call.

In this blog, we’ll break down their use cases, explore when a hybrid approach makes sense, and outline the key factors that should guide your decision, whether that’s RAG, fine-tuning, or a combination of both.

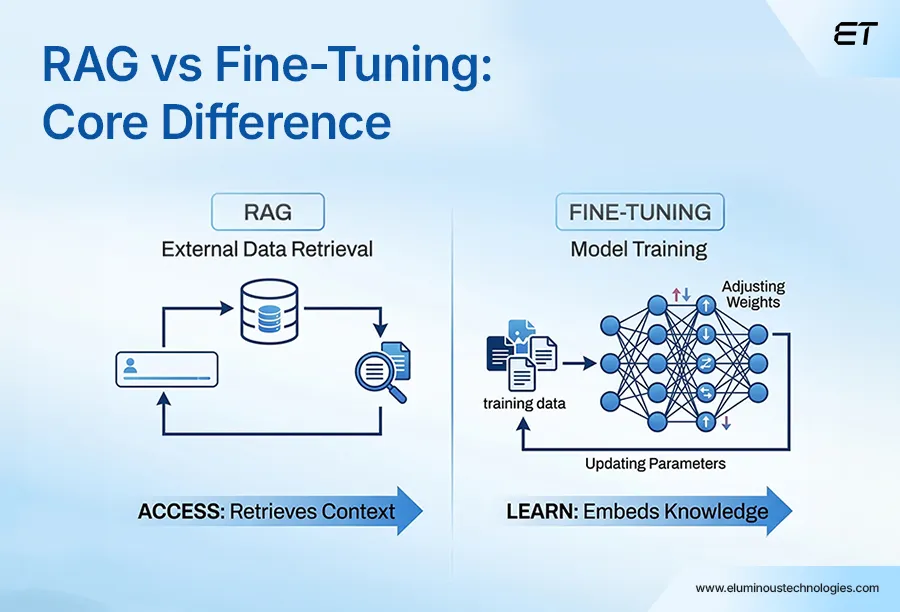

RAG vs Fine Tuning: Key Strategic Differences

RAG and fine-tuning are both powerful approaches for working with LLMs, but they solve different problems. Choosing the right one directly impacts development speed, operational costs, and overall ROI.

RAG changes what the model can access. Fine-tuning, on the other hand, changes what the model knows.

Fine-tuning modifies the model itself: training on your data adjusts its internal weights, so the knowledge it acquires is baked into the model.

RAG leaves the model intact and instead connects it to external data at query time. It adds a layer that pulls information relevant to the query so the model can generate an informed response.

In short, fine-tuning uses domain-specific data to change the model’s internal behavior, while RAG augments an unchanged model with external data retrieved at query time.

The two work in fundamentally different ways, and as a result they differ in cost and maintenance.

Check out our detailed comparison of MCP vs RAG and find out how you can combine both in your business.

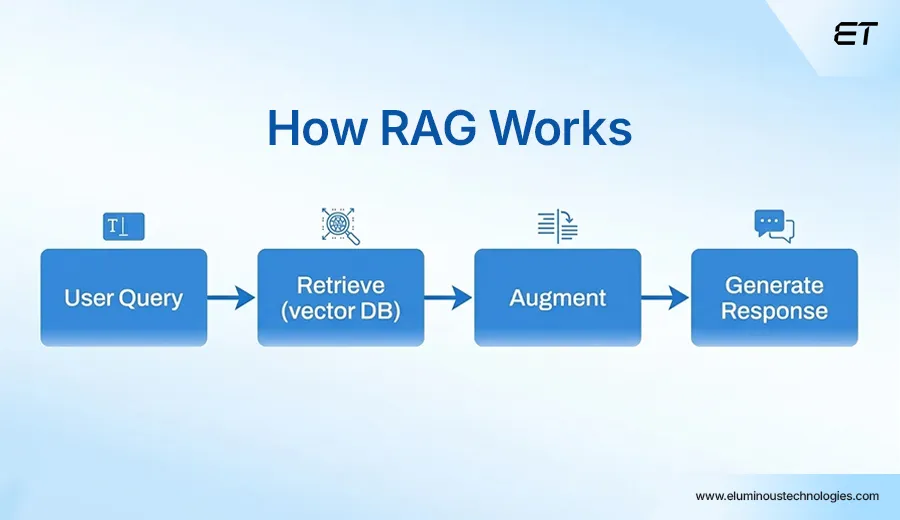

RAG: Definition, Use Cases, Business Value

Retrieval-Augmented Generation retrieves information from your proprietary data, typically stored in a vector database, and supplies the most relevant documents to improve the accuracy of a large language model’s response. Its primary goal is to extract relevant information from the knowledge base and add it to the prompt as context in real time.

Let’s understand the process with an example.

An employee at a financial services firm is looking for information on a recently passed bill and prompts the LLM to gather what is available on the topic.

A RAG system follows three steps every time:

Retrieve – The system transforms the query and semantically matches it against the indexed knowledge base. The closest-matching data is collected and returned.

Augment – The system injects the retrieved content into the prompt to give the model the context it needs.

Generate – The model now has the relevant information and produces a response that reflects both the intent of the query and the evidence in the retrieved data, following the instructions provided in the prompt.
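The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the documents are made up, the bag-of-words `embed` function stands in for a real embedding model, the in-memory list stands in for a vector database, and `generate` is a placeholder where an actual LLM call would go.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; a real system would index these in a vector database.
DOCUMENTS = [
    "The 2024 lending bill caps consumer loan fees at five percent.",
    "Employees accrue vacation days monthly under the HR leave policy.",
    "The product catalog lists current prices for all savings accounts.",
]

def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, docs, k=1):
    """Retrieve: rank documents by similarity to the query and keep the top k."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]

def augment(query, passages):
    """Augment: inject the retrieved passages into the prompt as context."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"

def generate(prompt):
    """Generate: placeholder for the LLM call (assumption: swap in your model client)."""
    return f"[LLM response based on a {len(prompt)}-character prompt]"

query = "What does the new lending bill say about loan fees?"
passages = retrieve(query, DOCUMENTS)
prompt = augment(query, passages)
answer = generate(prompt)
```

Here the lending-bill document ranks first because it shares the most terms with the query, and that passage is what the model sees in its prompt.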

For organizations that deal with frequently changing information, such as regulatory updates, internal policies, and live product data, RAG keeps answers current without the cost and delay of retraining.

RAG is also designed to provide traceable outputs, where the source of each piece of data is transparent.

In simpler terms, updating the dataset is enough to update the answers. You can learn more about RAG in our walkthrough, which covers every component.

Use Cases

RAG enhances AI systems by connecting them to external data sources such as internal documents, databases, or APIs and retrieving relevant information at query time. This allows the model to generate responses grounded in up-to-date, context-specific data rather than relying solely on its pre-trained knowledge.

1. Building Intelligent Customer Service Chatbots: RAG enables chatbots to answer questions accurately by retrieving data from support documentation, FAQs, and product manuals. Support assistants and sales bots often field questions about real-time information, so they need access to inventory and pricing data without daily retraining.

2. Document Summarization & Search: With access to external data in real time, RAG enables professionals to retrieve up-to-date information from internal and external sources. This supports workflows such as building market reports, analyzing trends, and evaluating company performance while the LLM assists in synthesizing and contextualizing the retrieved data for decision-making.

3. Enterprise Knowledge Management: Organizations use RAG to build search tools that give employees up-to-date access to policy, IT, and HR information. Because this information changes frequently, RAG keeps answers current as the underlying documents are updated.

Advantages

RAG extends what LLMs can do. LLMs are trained on large volumes of data and use billions of parameters to generate a response. RAG adds accuracy by retrieving specific data from external sources, without the need to retrain the model.

1. Cost-Effective: RAG is generally cheaper than fine-tuning an entire model. It retrieves data from your datasets and supplies it alongside the user’s query, so new data reaches the LLM through the prompt rather than through training, which keeps it broadly usable and accessible.

2. Enhances User Trust: RAG lets the LLM answer from current data and include the source of the information, with citations and references. This transparency lets users check the sources themselves for clarification and further detail.

3. Lowers Hallucination Rates: With RAG, responses do not depend solely on the model’s pre-trained parametric memory. Grounding the model in retrieved data helps it produce more accurate and relevant output.

4. Access to Real-Time Data: RAG does not modify or retrain the model; instead, it provides access to up-to-date information at query time by retrieving relevant data from external sources. Every response produced can be traced back to the source document. This matters in regulated industries.

Constraints

RAG fixes a lot of common LLM issues, but it’s not a silver bullet. You’re essentially adding a retrieval layer, which comes with its own trade-offs.

- Retrieval Relevance Isn’t Guaranteed: RAG is only as good as what it retrieves. The system can pull documents that look related but miss the actual intent, leading the model to generate answers that sound right but are only partially correct.

- Hallucinations Don’t Disappear: RAG reduces hallucinations, but doesn’t eliminate them. If the retrieved data is incomplete, outdated, or contradictory, the model will still try to fill in the gaps, sometimes confidently getting it wrong.

- Ongoing Data Maintenance: RAG isn’t a set-it-and-forget-it system. Your knowledge base needs regular updates like re-indexing, re-embedding, and pipeline tuning. If that slips, retrieval quality drops drastically.
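One way to soften the first two constraints is to check how well the best match actually fits the query, and to decline to answer when nothing clears a bar. The sketch below is only illustrative: the Jaccard word-overlap score stands in for a real relevance score, the `min_score` value of 0.2 is an arbitrary assumption you would tune on your own data, and the documents are invented.

```python
import re

def tokens(text):
    """Lowercased word set for a piece of text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a, b):
    """Word-overlap score between 0 and 1 (toy stand-in for a relevance score)."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0

def retrieve_with_guard(query, docs, min_score=0.2):
    """Return (best_doc, score), or (None, score) when nothing is relevant enough."""
    if not docs:
        return None, 0.0
    score, best = max((jaccard(query, d), d) for d in docs)
    return (best if score >= min_score else None), score

# Hypothetical support documents.
DOCS = [
    "Refund requests are processed within 14 days.",
    "Our office is closed on public holidays.",
]

hit, hit_score = retrieve_with_guard("How many days are refund requests processed in?", DOCS)
miss, miss_score = retrieve_with_guard("What is the CEO's favorite color?", DOCS)
```

When `retrieve_with_guard` returns `None`, the application can tell the user it has no supporting source instead of letting the model fill the gap on its own.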

Fine Tuning: Definition, Use Case, Business Value

Fine-tuning refers to adapting a pre-trained model to a specific task or domain by training it on curated data. It involves updating the model weights so it learns domain-specific patterns, terminology, and response styles, improving its performance for targeted use cases.

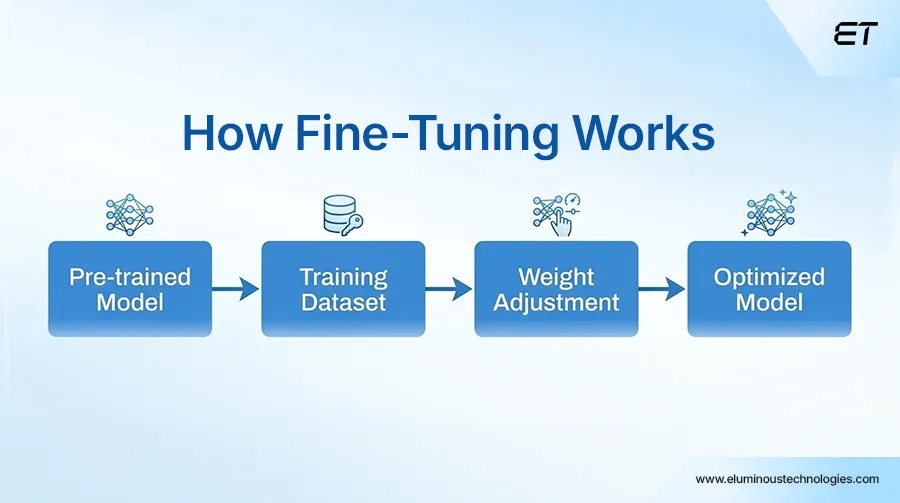

This is how it works:

- Selecting a Pre-Trained Model: The first step is to choose a pre-trained model that has already been trained on a large, diverse dataset.

- Loading the Data: The model is then trained on your curated, task-specific data. This is where it acquires task-specific features, as its layers are fine-tuned and aligned to the new task.

- Evaluating the Output: Next, evaluate the model’s performance and keep adjusting settings such as the learning rate. The model may need more layers or other modifications before it performs optimally.

The quality of training data plays a crucial role in success. If your data is messy or incomplete, the model will learn the wrong patterns. Fine-tuning is therefore not a shortcut: the required refinements cost both money and time.
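To make the select, train, and evaluate loop concrete, here is a deliberately tiny sketch. It is not how an LLM is fine-tuned in practice: the "model" is a two-parameter line `y = w * x + b`, the starting weights, the datasets, and the learning rate are all assumed values, and plain gradient descent stands in for a real training framework. The point is only that training starts from existing weights and nudges them toward the domain data.

```python
# Step 1: start from "pre-trained" weights (assumed values for illustration).
pretrained = {"w": 1.0, "b": 0.0}

# Step 2: curated domain data (hypothetical), generated from y = 2x + 1.
train_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
eval_data = [(4.0, 9.0), (5.0, 11.0)]  # held out for evaluation

def mse(params, data):
    """Mean squared error of the model on a dataset."""
    return sum((params["w"] * x + params["b"] - y) ** 2 for x, y in data) / len(data)

def fine_tune(params, data, lr=0.05, epochs=500):
    """Continue training from the given weights with plain gradient descent."""
    w, b = params["w"], params["b"]
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return {"w": w, "b": b}

# Step 3: evaluate before and after on data the model was not trained on.
loss_before = mse(pretrained, eval_data)
tuned = fine_tune(pretrained, train_data)
loss_after = mse(tuned, eval_data)
```

If `loss_after` were not clearly lower than `loss_before`, that would be the signal to revisit the data or the learning rate, which is the adjustment loop described above.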

Use Cases

Fine-tuning adapts the model to a specific domain and gives it a behavioral depth that prompt engineering alone rarely achieves with a general model. Here are cases where fine-tuning is worth the investment.

1. Scaling Brand Voice: A fine-tuned model produces more predictable output at scale, reducing variability and improving reliability. Here, the output needs to match the brand’s tone, style, and voice every time. Fine-tuning helps maintain that consistency, whether the target is a formal corporate tone or a friendly, customized customer support persona.

2. Executing Tasks with Precision: Fine-tuning helps models perform specialized tasks such as classifying legal documents, providing code reviews aligned with project goals, and summarizing medical reports. Because the model is aligned with the domain, accuracy and reliability improve, which makes the model more trustworthy.

3. Specializing Domains: Tailored models can understand financial, legal, and medical terminology. Adapting LLMs to a specific industry in this way can improve accuracy and reduce hallucinations. A key use is structured data extraction, which depends on understanding specialized terms.

Advantages

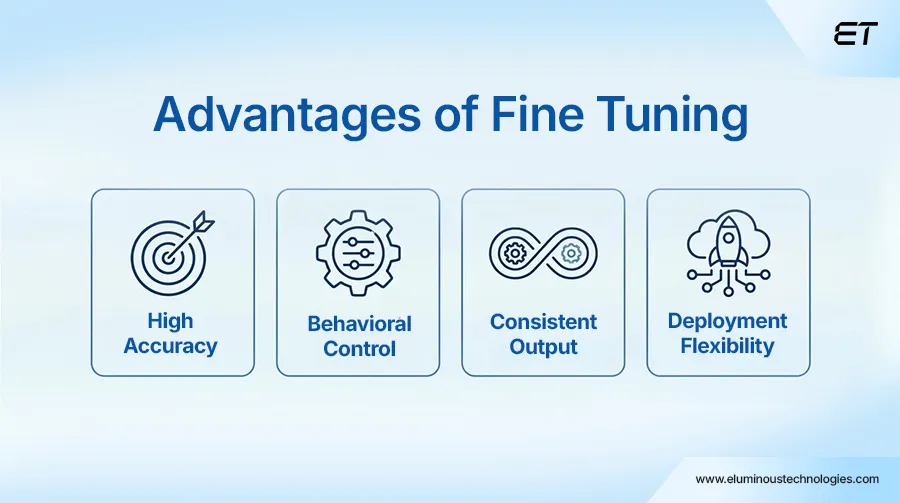

While fine-tuning carries a tangible cost profile, requiring compute, ML expertise, and curation time, the payoff is measurable and brings clear strategic benefits.

1. Improves Performance: Fine-tuning improves task-specific performance by training the model on domain-relevant data. This leads to higher classification accuracy, more consistent responses, and better adherence to expected outputs.

2. Maintaining Tone and Style: Once fine-tuned, a model can begin to reflect brand guidelines and a specific voice, but the consistency of this behavior depends heavily on the quality, coverage, and consistency of the training data.

3. Deployment Flexibility: Fine-tuned models can run in isolated environments, including air-gapped ones, without relying on external data sources.

Fine-tuning helps transform a general-purpose AI model into a precise, specialized, and aligned model that reflects the organization’s brand voice and goals.

Limitations

Fine-tuning can deliver strong results, but it comes with clear trade-offs that need to be considered before investing at scale.

- Static Knowledge: A fine-tuned model only reflects the data it was trained on and does not automatically update with new information. As your domain evolves through regulatory changes, product updates, or new data, maintaining relevance requires periodic retraining, which becomes an ongoing operational effort.

- Time and Cost Overhead: Fine-tuning at scale can be resource-intensive, requiring GPU infrastructure, data preparation, and iteration cycles. While not all projects take months, the cost and time involved can increase significantly depending on model size and complexity.

- Execution Complexity: Effective fine-tuning requires expertise in data curation, evaluation, and model optimization. Poorly executed fine-tuning can degrade performance or introduce inconsistencies, making it critical to have the right processes and experience in place.

RAG vs Fine Tuning: A Practical Decision Framework

Both methods are built for different purposes and can achieve distinct goals. Before choosing the right approach, you should be aware of various factors and criteria that affect the real architectural decision.

| Dimension | Fine-Tuning | RAG |
| --- | --- | --- |
| Response Speed | Generally faster and more consistent, as responses are generated directly without external retrieval | Can be slower due to the retrieval and ranking step, with latency depending on system design and optimization |
| Maintenance | Requires periodic retraining cycles | Requires keeping the knowledge base and retrieval pipeline up to date |
| Data Privacy | Moderate: training data is often absorbed into model weights | Strong: data stays external and is supplied at query time |
| Behavior Control | Strong control over behavior, tone, style, and task-specific responses | Limited behavior control; primarily relies on the base model while augmenting responses with external data |
| Knowledge Currency | Requires retraining to update | Can reflect updates in real time without retraining |
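The table can also be read as a rough checklist. The sketch below encodes three of its rows as yes/no questions; the `Requirements` fields, the `recommend` function, and the rules themselves are illustrative assumptions, a conversation starter rather than a substitute for evaluating your own workload.

```python
from dataclasses import dataclass

@dataclass
class Requirements:
    data_changes_often: bool   # Knowledge Currency row
    needs_citations: bool      # traceable, auditable answers
    needs_strict_style: bool   # Behavior Control row: tone, format, decision patterns

def recommend(req: Requirements) -> str:
    """Map requirements to a starting-point architecture (rule of thumb only)."""
    wants_rag = req.data_changes_often or req.needs_citations
    wants_fine_tuning = req.needs_strict_style
    if wants_rag and wants_fine_tuning:
        return "hybrid"
    if wants_rag:
        return "rag"
    if wants_fine_tuning:
        return "fine-tuning"
    return "no strong signal"

support_bot = recommend(Requirements(True, True, False))
contract_drafter = recommend(Requirements(False, False, True))
regulated_reports = recommend(Requirements(True, True, True))
```

Under these assumed rules, the support bot maps to RAG, the contract drafter to fine-tuning, and the regulated reporting case to a hybrid.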

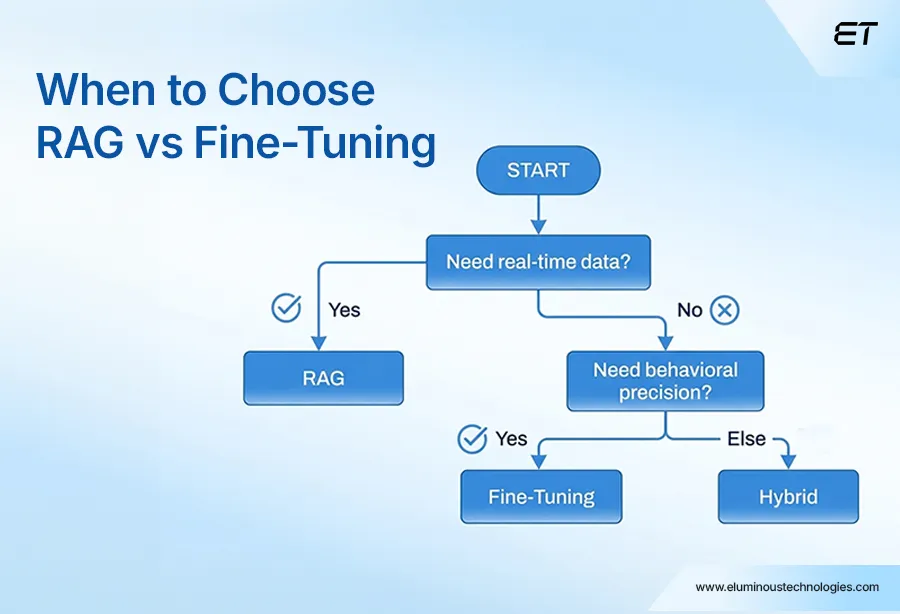

When to Choose RAG vs Fine-Tuning

Either RAG or fine-tuning can level up your AI. But here’s where most teams get it wrong: they jump in before figuring out which one fits their problem.

The real value comes from choosing the one that aligns with what your AI needs to do.

Choose RAG if:

RAG makes sense when your data changes often, and you can’t afford to rely on static knowledge. Instead of expecting the model to know everything, it pulls in the latest information at query time, so responses stay fresh and relevant. Just keep in mind that freshness depends entirely on how well your retrieval system is set up and maintained.

Customer chatbots are a good example: they often need up-to-date information that becomes available to the system as soon as it is added.

RAG can also be a good choice for organizations whose critical information must be backed by citations, security, and reliability. For some organizations, the ability to trace an answer to a specific source is a hard requirement.

Choose Fine Tuning if:

Fine-tuning is the primary choice when your application places demanding, specialized requirements on the model itself rather than on the data it can reach.

Fine-tuning is better suited for stable, domain-specific behavior. If your goal is to make the model follow a consistent tone, format, or decision pattern, fine-tuning works well. It’s especially useful for standardized tasks where consistency matters more than real-time knowledge updates.

The primary objective of fine-tuning here is to produce outputs that follow prescribed formats and meet specialized domain requirements, for example when drafting legal contracts or writing medical documents.

It is important to distinguish how each approach gets its results. RAG works with external resources, allowing information to be updated without interfering with the actual model. Fine-tuning works by updating the model’s learned parameters (weights) through additional training. Depending on the approach, this can involve adjusting the entire model or just a subset of parameters, but the goal is the same: to reshape how the model behaves based on your data.
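The difference between adjusting the entire model and adjusting a subset of parameters can be shown on a toy scale. In this sketch the model is again a two-parameter line `y = w * x + b`, and the `trainable` argument (a name chosen here for illustration) decides which weights are updated while the rest stay frozen. Real parameter-efficient methods are far more involved; this only demonstrates the idea of freezing.

```python
def fine_tune(params, data, trainable, lr=0.05, epochs=1000):
    """Gradient descent on y = w*x + b that updates only the names in `trainable`."""
    params = dict(params)  # copy so the base weights are left untouched
    n = len(data)
    for _ in range(epochs):
        errors = [(params["w"] * x + params["b"] - y, x) for x, y in data]
        grads = {
            "w": sum(2 * e * x for e, x in errors) / n,
            "b": sum(2 * e for e, _ in errors) / n,
        }
        for name in trainable:
            params[name] -= lr * grads[name]
    return params

base = {"w": 2.0, "b": 0.0}                  # assumed "pre-trained" weights
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]  # hypothetical domain data: y = 2x + 1

full = fine_tune(base, data, trainable={"w", "b"})  # adjust the entire model
partial = fine_tune(base, data, trainable={"b"})    # freeze w, adjust only b
```

Both runs end up fitting the data here because the frozen weight already suited the task; when it does not, partial tuning trades some accuracy for lower training cost.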

Both have their own path to achieving results. The best choice will depend on your requirements and needs.

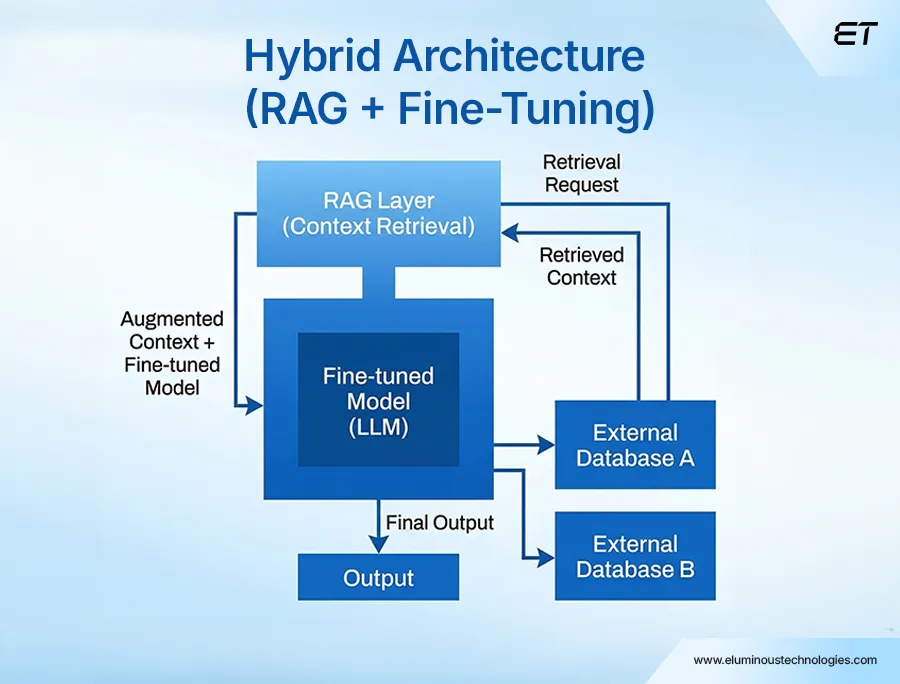

RAG vs Fine-Tuning: When to Combine Both

The choice is not always RAG or fine-tuning; many of the most robust AI systems use both, as a deliberate architecture.

Here is how it works in practice: First, you fine-tune a model to understand your domain language, tone, style, reasoning patterns, and behaviors. The model then internalizes your vocabulary and task patterns.

Then you layer a RAG pipeline on top, so the model answers each query in the style you trained it on, using current data. Combining RAG with fine-tuning gives you a specialized model with access to live updates and auditable information.

Example: Think of a medical firm that fine-tunes a model to get the tone, terminology, and reasoning just right, so every report sounds consistent and professional. Then they plug in a RAG layer to pull the latest updates from regulatory databases at query time. Now the model is working with fresh, real-world data.

That’s the sweet spot. Fine-tuning shapes how the model behaves, while RAG keeps what it says up to date. Put them together, and you get outputs that are both consistent and grounded in current information, exactly what high-stakes domains need.
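A hybrid setup can be sketched as two cooperating pieces. Everything below is hypothetical: the knowledge base entries are invented, word overlap stands in for a real retriever, and `fine_tuned_model` is a stub that only enforces a fixed report format where a real fine-tuned model would be called.

```python
import re

# Hypothetical regulatory knowledge base, keyed by document ID for traceability.
KNOWLEDGE_BASE = {
    "doc-001": "Guideline update: dosage labels must list the maximum daily dose.",
    "doc-002": "Clinic opening hours are 9am to 5pm on weekdays.",
}

def words(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, kb):
    """RAG layer: return the (doc_id, text) pair sharing the most words with the query."""
    q = words(query)
    return max(kb.items(), key=lambda item: len(q & words(item[1])))

def fine_tuned_model(prompt):
    """Stand-in for a fine-tuned model: it only applies a consistent house format."""
    return f"SUMMARY\n{prompt}\nEND OF REPORT"

def answer(query, kb):
    """Combine both: retrieval supplies current facts, the model supplies the style."""
    doc_id, text = retrieve(query, kb)
    prompt = f"Question: {query}\nEvidence [{doc_id}]: {text}"
    return fine_tuned_model(prompt), doc_id

report, source = answer("What must dosage labels list?", KNOWLEDGE_BASE)
```

Updating an entry in `KNOWLEDGE_BASE` changes the evidence in the next report without touching the model, while the report format stays the same, and the returned document ID keeps the answer auditable.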

Summing Up

When you put RAG and fine-tuning together, you’re assigning each to what it does best. Fine-tuning locks in how your model behaves: tone, structure, decision patterns. RAG handles what your model knows at any given moment by pulling in fresh, relevant data.

That’s why this combo shows up in serious, production-grade AI systems. You get consistency where it matters, and real-time accuracy where it counts, without overloading the model or constantly retraining it.

If you’re looking to implement this the right way, eLuminous Technologies brings hands-on expertise in building RAG-driven architectures, backed by a team of vetted AI developers. From designing retrieval pipelines to fine-tuning models for domain-specific use cases, we can help you move from experimentation to production with confidence.

Ready to make the decision? Our AI architect can help you map it out.